Digital Services Act: HateAid calls for #PowerToTheUsers

Why help shape the EU's new platform law (Digital Services Act)?

Some time ago, someone put together a meme showing the Green politician Renate Künast next to a dramatic, fabricated quote: “Integration begins with you, as a German, learning Turkish.”

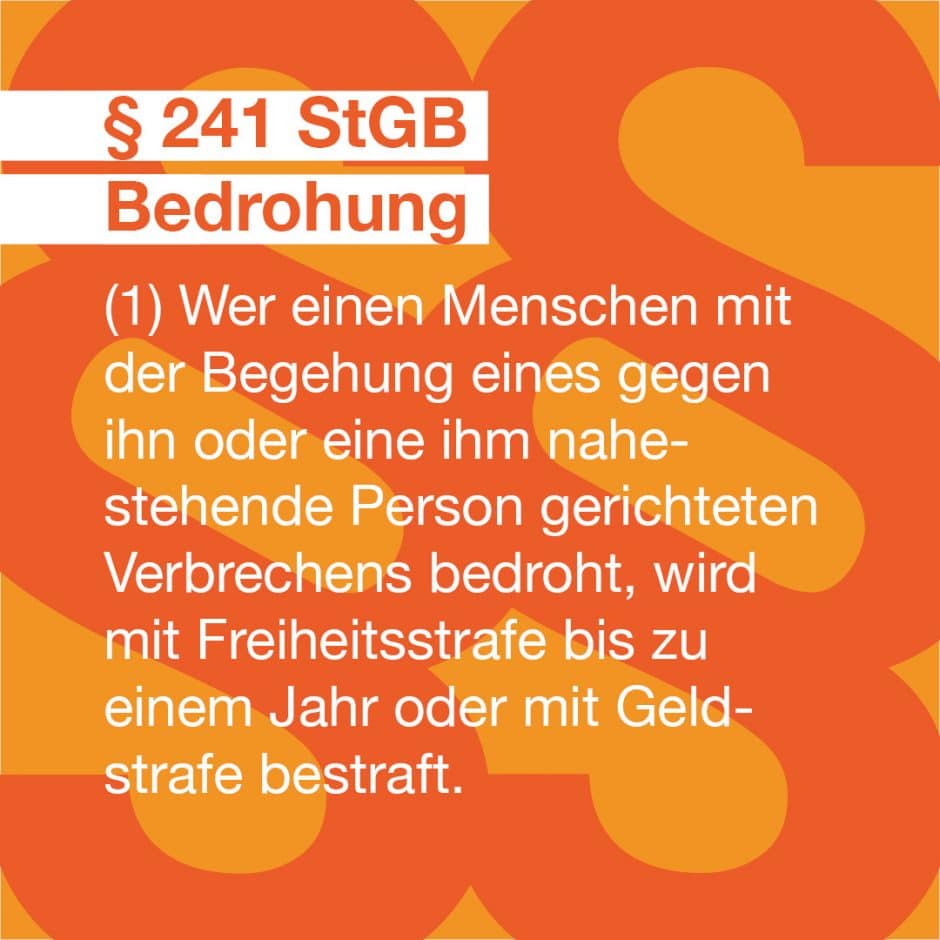

The meme spread quickly via various accounts (including fake ones), among other places on Facebook. Her profile was then flooded with extremely hostile reactions, some of them frightening, such as death threats from right-wingers or calls for her to be raped.

Together with HateAid, she took action against the platform's inaction and ultimately filed a lawsuit in April 2021. If she succeeds, the outcome will affect all users who want false posts like this one deleted quickly and everywhere. Cases like this are also at stake in the “Digital Services Act” (DSA), which will be negotiated in more concrete terms from June 2021. Ideally, the new rules will make platforms such as Facebook, TikTok, Twitter, Instagram and others safer for you and give you better service, especially in emergencies involving digital violence and hate.

It can happen to anyone, and for many it comes completely out of the blue. When you try to report hate messages, violent comments or attacks on your reputation, the problem is only just beginning: you flag the perpetrators' profiles and report the posts. And then … you hear nothing more from support. The posts and comments stay up, and your helplessness, shame and fear of the aggressive, unknown perpetrators grow worse every day.

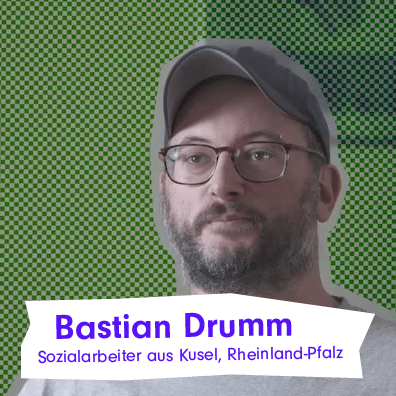

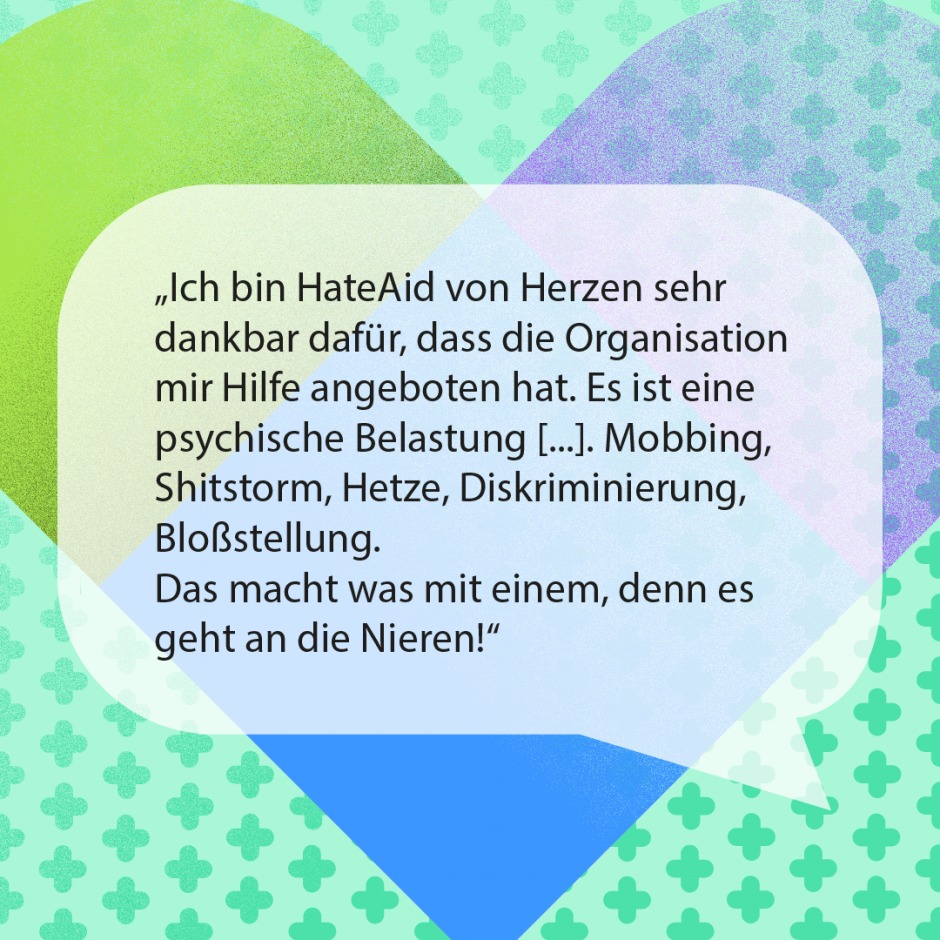

Digital violence has many faces: we support those affected all the way to the highest courts

More safety online in Germany and across the EU: this is what we want to achieve

We are calling for:

- More safety and support: for example, simple and understandable access to platform support, fast reporting options, and prompt review and deletion of illegal content. In an emergency, user support must be reachable 24/7.

- Clear rights for you: if platform support cannot help you, it must be clear how you can enforce your rights in Germany. Instead of hunting for an address abroad, learning another language or going straight to law school, you know exactly whom to turn to and how to find reliable help.

- Transparency: all users of platforms such as Google, Facebook, TikTok, Twitter and other services should be able to see, simply and clearly, what their rights and options are and where the limits of use lie. Platforms should give reasons for their decisions so that those decisions can be understood.

- Responsibility of the member states: it must be clear which country's law applies when something happens to you online and how you will be helped in Germany, and likewise in every other EU country.

Our detailed statement on the planned DSA is available in English here.

What you can do for safety online

If you want to help fight hate, violence and a lack of transparency online, join our campaign for a Digital Services Act that stands up as strongly as possible for the interests of a democratic society and for an internet without hate, and that protects you better online. Sign our petition to the EU NOW and support our work for a safe internet!

In-depth information on the Digital Services Act in Germany and Europe

What exactly is the Digital Services Act, and how does it work?

The Digital Services Act (German: Gesetz über digitale Dienste) is a draft regulation from the European Commission that was presented on 15 December 2020, so far only in English. Its full title is: “Proposal for a Regulation of the European Parliament and of the Council on a Single Market For Digital Services (Digital Services Act) and amending Directive 2000/31/EC” (rendered in German roughly as: „Entwurf für eine Verordnung des Europäischen Parlaments und des Rates für einen europäischen Binnenmarkt der digitalen Dienste und zur Änderung der Richtlinie 2000/31/EG“).

What is new? What are the DSA's main priorities?

According to the European Commission's website, the new Digital Services Act is meant to bring more safety and accountability to the online environment. The website goes on to say: “For the first time, a uniform set of rules on the obligations and responsibilities of intermediaries opens up new opportunities across the single market to offer digital services across borders, with a high level of protection for all users, regardless of where in the EU they live.”

The main priorities of the Digital Services Act in Germany and the EU

The Digital Services Act applies first of all to intermediary services such as internet access providers and domain name registrars, to hosting services such as cloud and web hosting services, and to online platforms such as online marketplaces, app stores and social media platforms.

What does HateAid have to do with the Digital Services Act, and is overblocking on its way?

HateAid focuses primarily on social networks and on the rights users have against them. We are working to ensure that users are no longer powerless against these overwhelmingly powerful networks, above all when it comes to content moderation. This requires clearly regulated, easily accessible processes for dealing with illegal content, and transparent decision-making that is open to review. So far the opposite is true: users can hardly make sense of decisions to delete content, and even less of decisions not to delete it, which often appear arbitrary. Those affected are fobbed off with terse boilerplate replies and have almost no legal means of challenging such decisions.

From HateAid's point of view, the problem with the draft Digital Services Act is its strong focus on measures against abusive reports and against the possibly unjustified deletion of content on social media. The European Commission intends these provisions to prevent restrictions on users' freedom of expression. Such a restriction could arise if certain posts are systematically reported by other users and then deleted even though there are no actual grounds for deletion (so-called overblocking). By contrast, the draft pays too little attention to calls for consequences for social media providers in cases of underblocking, that is, when users' legitimate interest in having certain posts deleted is ignored.

Who does the Digital Services Act apply to?

Under the Digital Services Act, additional requirements apply to very large online platforms, meaning those that reach more than 10% of the 450 million consumers in Europe, that is, more than 45 million people. Because platforms of this size pose particular risks for the spread of illegal content and for harm to society, they are subject to further strict rules: risk management obligations and the appointment of compliance officers; external risk audits and public accountability; transparency of recommender systems and choices for users in how they access information (to counteract the “opinion bubble”); and data sharing and cooperation with authorities and research institutions.

In each country, responsibility lies with so-called “Digital Services Coordinators” (in German roughly: „Koordinatoren für digitale Dienste“). It remains unclear for now how individual EU states that host a particularly large number of tech companies will be able to meet their new and expanded responsibilities. Google and Facebook, among others, have their official European headquarters in the Republic of Ireland. As a result, the Irish Digital Services Coordinator bears a particularly heavy responsibility, and the Irish courts could also face a large number of additional cases if these companies violate the Digital Services Act.

Germany already has the Network Enforcement Act (Netzwerkdurchsetzungsgesetz, NetzDG), a set of rules that addresses many of the challenges posed by social media.

The new law will bring far-reaching changes for society, similar to the GDPR

The reach of the Digital Services Act can be gauged from the sweeping impact of the General Data Protection Regulation (GDPR; German: Datenschutzgrundverordnung, DSGVO). Since 2018 it has raised data protection requirements across the EU to a level modelled on the one that previously applied in Germany under the Federal Data Protection Act (Bundesdatenschutzgesetz).